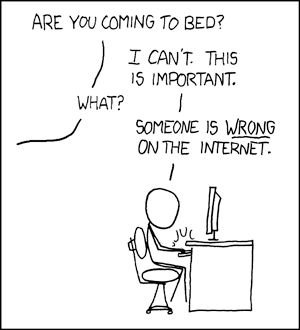

Somebody is WRONG about the Internet

Net neutrality is probably the most wonderfully boring topic, and ranting the most practiced online activity. Let's mix the two!

I know this blog is mainly about technical stuff and this isn't really a technical topic, but a recent event forced me to realise this is a topic I cannot avoid. Somebody is indeed wrong about the Internet.

More specifically, it's this comment that piqued my interest (who said ire?). With net neutrality becoming yet again a hot topic (it's gonna be repealed, remember…), and bots being used to promote its repeal, a pattern emerges in the opposition to net neutrality led by ISPs.

Uncertainty and Doubt §

Bots and human promoters alike spread the same message, however wrong their arguments might be. Neither the correctness of the arguments nor the facts they are based on matter anymore. It's all about being seen, even on small blogs like the one I linked above. When readers get to the comments and see a dissenting voice, they think there is still much to be debated and that no clear answer has emerged yet. Much like headlines that promise more than their article delivers, such comments often bias the reader. I'm not advocating for censorship of dissenting voices, but calling for a more constructive discussion, based on facts and on understanding each side's motives and arguments.

Debate is our friend §

They masquerade as commenters but don't actually feed the debate. By arguing back and clearly exposing their failing arguments (or lack thereof), we provide an anchor for readers.

Debate is also a nice way to build material for your own future posts. In terms of impact, an article always carries more weight than a comment. Hence this post.

Why is he wrong? §

Nothing personal intended: his comment is just a good summary of ideas about net neutrality that have, sadly, spread widely, yet are wrong in every sense. Beware, I'm gonna quote the shit out of my answers.

"Companies have created Internet" §

Nope.

The history of the Internet begins with the development of electronic computers in the 1950s. Initial concepts of wide area networking originated in several computer science laboratories in the United States, United Kingdom, and France. The US Department of Defense awarded contracts as early as the 1960s, including for the development of the ARPANET project, directed by Robert Taylor and managed by Lawrence Roberts. The first message was sent over the ARPANET in 1969 from computer science Professor Leonard Kleinrock's laboratory at University of California, Los Angeles (UCLA) to the second network node at Stanford Research Institute (SRI). Donald Davies first demonstrated packet switching in 1967 at the National Physical Laboratory (NPL) in the UK.

Public funding, public research institutes. But what about TCP/IP?

The Internet protocol suite (TCP/IP) was developed by Robert E. Kahn and Vint Cerf in the 1970s and became the standard networking protocol on the ARPANET, incorporating concepts from the French CYCLADES project directed by Louis Pouzin. In the early 1980s the US National Science Foundation (NSF) funded national supercomputing centers at several universities, and in 1986 it provided interconnectivity between them with the NSFNET project.

Still public. What about the web?

In the 1980s, research at CERN in Switzerland by British computer scientist Tim Berners-Lee resulted in the World Wide Web, linking hypertext documents into an information system, accessible from any node on the network.

Puuuuuublic.

Commercial Internet service providers (ISPs) began to emerge in the very late 1980s. Limited private connections to parts of the Internet by officially commercial entities emerged in several American cities by late 1989 and 1990, and the NSFNET was decommissioned in 1995, removing the last restrictions on the use of the Internet to carry commercial traffic.

It feels like the ISPs are late to the party. Some would say they are mere laggards.

But maybe you are talking about the network cables? If that is the case, know that the actual infrastructure relied mainly on copper lines already laid for the telephone network. And what about the last mile?

Much of that network was built by the state, and private ISPs don't always deliver, even in big cities. Again, it's very logical: you only build where you can make a profit. Nothing wrong with that. Choosing one or the other to build the last-mile network isn't being anti-company or anti-state, but the ultimate goal of all this fuss is to have a better network for the masses, not to choose based on where you sit on the political spectrum.

Questions yet to be answered:

- What is the comparative cost of 95% coverage at (insert desired bandwidth) for a public vs. a private deployment?

"Net Neutrality has never existed" §

And even if it has never been enforced by law, it is still important, as more and more of our learning, social and political opportunities depend on it (more on that below).

"Internet is broken" §

I know, I know. The Internet is broken because our usage has changed and our craving for more videos, more series, more streaming, more torrents and more (insert blame on the user) is killing it.

It is true we use the Internet differently than a few years ago, and it will certainly continue to change. Strangely, the blame is always directed towards the users and not towards those who maintain the network. Stranger still, nobody blames them for refusing to develop technologies that would have helped the network adapt. For instance, a lot has been done towards distributed content delivery (BitTorrent, and others before it), both in academia and in privately funded research. There is interest, but no ISP has been pushing for such technologies. They see the infrastructure as a mere convenience and don't aim to really improve how it works upstream.

A corollary is that some use the network disproportionately compared to others: the main culprits are the GAFAMs and, more broadly, a business model based on client-server applications (centralized video servers like YouTube's). But the point is often used to justify tiered services (you pay per site, or per pack of sites/protocols, you want to access). A quick fix would be to use modern technologies (decentralized content delivery, dynamic caching in the network), but even though I'm a strong believer in solving problems with technology, I also trust politics to steer the use of networks towards more precise goals (yes, politics are everywhere, just look at all the boards that govern the standards and the resources of the Internet, like addresses and domain names!).

But okay, why not. Let's assume this is the only way to fix the Internet. Even then, as with insurance and social security, you don't want to make people pay only for what they need: the Internet is a constantly changing medium (read: waaaay more than any other), which makes its usage very difficult to meter. Even peering agreements meter only the bandwidth used, not the sites visited or the protocols used, because keeping track of ever-changing addresses and workarounds would be a mess. There is no optimisation possible, no invisible hand that will make things converge.

"Not everyone needs all Internet" §

Of course I don't connect to the entire Internet at once. But what do you need? What do I need? How do you rate the cost of each need? If I invent a new protocol to connect to those sites on the network, how do you rate it? What if I use a VPN?
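To make the VPN question concrete, here is a toy Python sketch (not how any real ISP meters traffic) contrasting volume metering, which just counts bytes like the peering agreements mentioned above, with per-site metering. The `Packet` type, the `SITE_PACKS` table and all addresses are hypothetical; the addresses come from the RFC 5737 documentation ranges. Per-site metering depends on a lookup table someone has to keep up to date, and once traffic goes through a VPN, every packet points at the VPN endpoint: the per-site view collapses into "unknown" while the byte count stays the same.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass
class Packet:
    dst: str   # destination IP address
    size: int  # bytes on the wire


# Hypothetical "site packs" a tiered offer might sell. Addresses are taken
# from the RFC 5737 documentation ranges; real sites change theirs constantly,
# so a real table would need continuous updating.
SITE_PACKS = {
    "video": [ip_network("198.51.100.0/24")],
    "social": [ip_network("203.0.113.0/24")],
}


def meter_volume(packets):
    """Volume metering: add up bytes, no knowledge of content needed."""
    return sum(p.size for p in packets)


def meter_per_pack(packets):
    """Per-pack metering: match each packet's destination against SITE_PACKS.

    Anything the table does not know about ends up under "unknown".
    """
    usage = {}
    for p in packets:
        addr = ip_address(p.dst)
        pack = next(
            (name for name, nets in SITE_PACKS.items()
             if any(addr in net for net in nets)),
            "unknown",
        )
        usage[pack] = usage.get(pack, 0) + p.size
    return usage


direct = [Packet("198.51.100.7", 1500), Packet("203.0.113.42", 600)]
# The same traffic through a hypothetical VPN server at 192.0.2.10
# (tunnel overhead ignored for simplicity).
via_vpn = [Packet("192.0.2.10", 1500), Packet("192.0.2.10", 600)]

print(meter_volume(direct), meter_volume(via_vpn))  # 2100 2100
print(meter_per_pack(direct))   # {'video': 1500, 'social': 600}
print(meter_per_pack(via_vpn))  # {'unknown': 2100}
```

A brand-new protocol or a freshly moved server poses the same problem as the VPN: the table only knows what it was told about.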

Is all that metering really going to give everyone a better service? A fairer service?

"Some people need a faster/more reliable Internet line…" §

And they already pay for it. That's why we deploy FTTH or redundant copper lines first to the companies and buildings that need this extra service.

"…and for that we need to slow other people"

Don't fall for this. Link saturation caused by home users is not a thing.

"…and look at IoT: we need to finance that fancy tech"

IoT is mainly a business need, financed by corporate clients. Even home users are paying for it in a way, as 5G base stations (gNBs) already support most IoT use cases in their specification, and we are gonna pay for them the same way we are paying for the network right now.

"…and reliability for surgical operations over mobile networks"

Don't laugh, I've heard a lot of people from Orange cite this as a future need that requires heavy investment. It is even taught in class to our future network engineers.

Look, there is no need for such things right now, or even in ten years. If you take this argument as seriously as I do and try to picture it, you can imagine doctors doing consultations remotely from their office (then it is a fiber connection with QoS) or, at the very most, a surgical operation over 4G (fixed allocation of resource blocks (RBs) already exists and is even used for home connections over 3G/4G), even though the delay will only become reasonable with 5G (<5 ms instead of <100 ms in 4G). But then 5G will already be financed by home users, so, you know, QED.

"Poor people don't need the luxury of all Internet, and could pay less with specific offers" §

If you want poor people to pay less for Internet access, you should be looking at political measures to lower the cost altogether, or at paying for their access. Specific offers, on the other hand, try to implement a social service the wrong way, through a pay-per-use that is difficult (impossible?) to define: what counts as luxurious Internet usage? Even more difficult to define: what Internet do poor people need, and how does it use the network less than rich people's?

Actually, poor people need the Internet more than rich people do. They might even use the network more. Why? Maybe because their only way to learn is through MOOCs, videos or online debates. Maybe because it's their only way to access an otherwise unaffordable higher education. Call them lazy for not going to classes or not working to pay for school if you want, but learning on the Internet is always gonna require multiple sources and formats to suit each and every learner. That is also part of the problem with traditional educational media: one professor handing down his knowledge is a one-way process that is outdated and potentially inefficient. It also implies a hierarchy that is not needed when one learns on the Internet. On the Internet, there are resources, and you go fetch them and absorb them. You are your own teacher. This comes with disadvantages too: it's more time-consuming to look for something and summarize it for proper assimilation. But when you're poor, you sometimes don't have the choice, and the Internet is your only resource. So you browse a lot of information on various sites and watch videos, just to try to grasp what a rich person would have learned in a class or two at school.

There is a huge social difference, and while I don't think of the Internet as an equalizer by any means, it can certainly widen the social gap caused by wealth gaps.