Going further with my server and securing docker

Ansible deployment? I just discovered "infrastructure as code". And while it takes time, it is very maintainable. Some problems arise though: Docker security with AppArmor? Dreadfully complicated, unless you have some automatic tools.

You might want to jump to the part dedicated to securing docker, the first part being only a review of my VPS's new setup.

Standing after the crash

Previous rebuilds of my VPS were due to misconfigurations of my ssh servers, errors that can be easily avoided. I learned to do application back-ups. But when the disk of my server crashed last month, I had enough of rebuilding everything by hand and decided to store my configuration and automate it.

Stateful configuration with Ansible

Placing each configuration file and installing each app by hand in a terminal on my VPS is a long and cumbersome task. Ansible helped a lot by automating everything in the sanest manner: it handles the ssh connection to potentially many servers and guarantees that tasks get executed with stateful results. It forces me to write tasks dedicated to copying the configuration files and tasks dedicated to the command lines I would otherwise type manually, but it does so in an organised manner and provides state information. This way you can launch all the tasks in one ansible command and be sure your server is ready.
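To illustrate what "stateful" means here, below is a minimal sketch of two tasks that put one configuration file in place. The paths, ownership and file modes are placeholders picked for the example; only the `file` and `copy` modules themselves are standard Ansible.

```yaml
# Hypothetical task file, e.g. roles/<some-role>/tasks/main.yml
- name: Ensure the traefik configuration directory exists
  file:
    path: /docker/traefik                # placeholder directory on the server
    state: directory                     # desired state, not a command to run
    mode: '0750'

- name: Install the traefik configuration file
  copy:
    src: traefik.toml                    # placeholder: file shipped in the role's files/ directory
    dest: /docker/traefik/traefik.toml   # placeholder destination on the server
    owner: root
    group: root
    mode: '0640'
```

On a first run Ansible reports both tasks as changed. On later runs, if the directory and the file already match what is described, it reports ok and touches nothing.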

    ---
    - hosts: nebuleuse # a group of computers I want to target with the tasks below
      roles:
        - role: 'geerlingguy.security'
        - role: 'rigelk.minimal-packages'
        - role: 'rigelk.create-new-user'
        - role: 'rigelk.vimrc'
        - role: 'mikegleasonjr.firewall'
        - role: 'rigelk.docker'
        - role: 'franklinkim.docker-compose'
        - role: 'rigelk.compose'

The last role, rigelk.compose, spawns a simple command (I don't use the Ansible module, to keep it easily modifiable for now): docker-compose up -d. It launches containers for most of the apps I use on my server. Of course it is a shortcut compared to installing everything bare metal (even through Ansible).

But it also gives a way to keep track of configuration in an ordered manner. Plus, multiple roles have already been written by the community around Ansible Galaxy. It doesn't solve every problem but it sure gives a basis to solve yours.
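For reference, a role like rigelk.compose can be as small as a single task wrapping the docker-compose command line mentioned above. This is only a sketch: the file layout and the /docker working directory are assumptions, and the real role may differ.

```yaml
# Hypothetical roles/rigelk.compose/tasks/main.yml
- name: Start all containers declared in docker-compose.yml
  command: docker-compose up -d
  args:
    chdir: /docker   # assumption: directory that holds docker-compose.yml
```

Since the `command` module cannot tell whether anything actually changed, Ansible reports this task as changed on every run unless a `changed_when` condition is added.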

Docker-compose for now

For now. There seems to be quite a number of ways to launch docker containers at scale. Docker-compose is the one for local scale (local orchestration), and since I only have one target server for now, version 2 of the docker-compose file format is enough for my use. Version 3 seems to be the official way to scale to other machines, but then again I can look at more sophisticated tools like Rancher or Mesos+Kubernetes.

The big advantage of docker-compose is the possibility to launch multiple services at once, in a manner that interconnects them well. You keep the main benefit of containers, which is to be kept from interfering with the system, and add the possibility of links between containers.

Here I show an excerpt of an application behind a reverse proxy that handles certificate generation and TLS security settings in one place. It is especially important since you cannot start rewriting and maintaining each and every container so that it both generates certificates and follows the latest cipher suite preferences. With one reverse proxy handling that (here the docker-aware traefik), I manage the security parameters in one place (specifically, in the traefik.toml file).

    version: '2'

    services:
      proxy:
        image: traefik
        ports:
          - "80:80"
          - "443:443"
          - "8080:8080"
        volumes:
          - "/docker/traefik/traefik.toml:/etc/traefik/traefik.toml"
          - "/docker/traefik/acme:/etc/traefik/acme"
          - "/var/run/docker.sock:/var/run/docker.sock"
        restart: unless-stopped
        labels:
          - "traefik.frontend.rule=Host:a.example.net"
          - "traefik.port=8080"
          - "traefik.backend=traefik"
          - "traefik.frontend.entryPoints=http,https"
        networks:
          - traefik-portainer

      portainer:
        image: portainer/portainer
        volumes:
          - "/var/run/docker.sock:/var/run/docker.sock"
        restart: unless-stopped
        labels:
          - "traefik.frontend.rule=Host:b.example.net"
          - "traefik.port=9000"
          - "traefik.backend=portainer"
          - "traefik.frontend.entryPoints=http,https"
        networks:
          - traefik-portainer

Security measures for the shipyard

With the above container technology (Docker), applications are compartmentalized and set apart from each other. But should they be compromised, they often suffer from the poor design of their docker images: most of the time, processes run as root inside the container. A compromised container can then both write to mounted volumes and use its network access, or try to escape containerization and compromise the docker service itself. Resulting counter-measures:

- (long term) rewrite images so that they use non-root processes

- (short term) run the docker service as an unpriviledged user

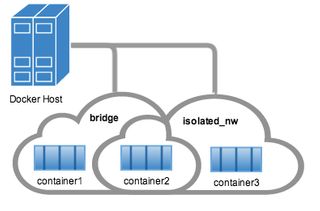

- (short term) run the docker images in separate networks (with

docker network create) that you only share between applications of the same stack - (long term) run the docker images with tailored AppArmor profiles (to protect mounted volumes)

- (long term) run the docker images with tailored seccomp profiles (to protect system calls)
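While waiting for images to be rewritten, part of the first counter-measure listed above can be approximated from the compose file itself. The sketch below uses a hypothetical service named app; the image name, the UID/GID, and whether a given application tolerates these settings are all assumptions to check image by image.

```yaml
version: '2'

services:
  app:
    image: example/app        # hypothetical image
    user: "1000:1000"         # run the main process as a non-root UID:GID
    read_only: true           # mount the container's root filesystem read-only
    cap_drop:
      - ALL                   # drop all Linux capabilities the process does not need
```

This does not replace a properly built image: an application that expects to write to its own filesystem or to bind a privileged port will simply fail to start with these options.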

A network for each software stack

That's probably the easiest one. You just need to create one network per minimal working set of containers that need each other. Networks do not have to be disjoint: they can perfectly overlap, with one container belonging to several of them, as described in the docker documentation.

Bear in mind that there are two ways to declare networks in the v2+ docker-compose file:

    networks:
      frontend:
        # Use a custom driver
        driver: custom-driver-1

or:

    networks:
      default:
        external:
          name: my-pre-existing-network

The former generates the network if it doesn't exist, and the latter only connects to pre-existing networks.
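To make the "one network per stack" idea concrete, here is a sketch where the reverse proxy sits in two networks while each stack only sees its own. The app and db services, their images, and the network names traefik-app and app-db are hypothetical; only proxy, portainer and traefik-portainer come from the excerpt shown earlier.

```yaml
version: '2'

services:
  proxy:
    image: traefik
    networks:
      - traefik-portainer     # shared with portainer only
      - traefik-app           # shared with app only

  portainer:
    image: portainer/portainer
    networks:
      - traefik-portainer

  app:
    image: example/app        # hypothetical application image
    networks:
      - traefik-app           # reachable by the proxy
      - app-db                # private link to its database

  db:
    image: postgres           # hypothetical database for the app stack
    networks:
      - app-db

networks:
  traefik-portainer:
  traefik-app:
  app-db:
```

Here db is reachable from app only: neither proxy nor portainer shares a network with it.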

AppArmor/seccomp security

Both AppArmor and seccomp need to be enabled in your kernel at boot, which means that you will likely have to reboot. So plan for a service interruption during maintenance, and check the setup on a local VM beforehand. But we always do that before pushing to production, right?

AppArmor profiles can be somewhat dreadful to write and understand. Fortunately, several projects aim at simplifying the way to write them. Bane is one such project: it generates AppArmor profiles from simple templates that you write by hand, and that you can then test against your application with proper feedback.
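Once a profile exists, attaching it to a container is a matter of one option in the compose file. In this sketch the profile name docker-portainer and the seccomp file path are hypothetical; adapt them to whatever your tool generated.

```yaml
version: '2'

services:
  portainer:
    image: portainer/portainer
    security_opt:
      - apparmor:docker-portainer                  # hypothetical AppArmor profile name
      - seccomp:/docker/seccomp/portainer.json     # hypothetical seccomp profile path
```

The AppArmor profile must already be loaded on the host (for instance with apparmor_parser) before the container starts, otherwise docker refuses to run it.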